Why Critical Thinking Frameworks Don't Work on AI Content

Critical thinking frameworks were built for a different problem. They assume a fixed source: an article, a study, a claim someone made. Your job is to evaluate it. Is the source credible? Is the reasoning sound? Are there logical fallacies? You stand outside the content and assess it.

That model still works when AI output behaves like a conventional claim, something detached enough from the prompt conditions to be examined on its own terms. The failure is more specific than a general breakdown. It happens at the generative interface, where input and output are entangled, where what you asked and how you asked it are already inside what comes back.

The problem isn't the output. It's how the output forms.

When you ask a search engine a question, it retrieves documents that existed before you asked. Your framing doesn't change what's out there. With a generative AI system, the opposite is true. The response forms around your input. The assumptions embedded in your question, the framing you chose, the vocabulary you used, all of it shapes what comes back.

The output isn't a fixed claim you can evaluate from the outside. It's a reflection of your own assumptions, elaborated and returned to you with apparent authority.

Standard critical thinking tells you to check your sources and ask whether the reasoning holds. It doesn't tell you to check yourself, specifically the frame you brought before you asked. That's where the failure sits. Not in the answer but in the question, in the quiet architecture that shapes what becomes sayable.

Schema-absorbing

There's a name for what happens when you engage with AI content without accounting for this. Schema-absorbing.

A schema is the set of assumptions and expectations you bring to any new situation. It's how you organise what you encounter before you've fully processed it.

With generative AI, schemas become a liability. You bring an implicit framing to the question. The model doesn't just reflect that framing back; it optimises for coherence within the constraint space that framing defines. The response feels sound because it's built on the same assumptions you started with, and the model has made those assumptions as internally consistent as possible. Coherence becomes the masking layer. What you experience as validation is the loop completing.

You evaluate the output using the same assumptions that shaped it. Nothing gets challenged. Nothing gets corrected. You leave more confident in a position the process never actually tested.

This isn't a failure of intelligence. It happens to careful, experienced people every time they use these tools without a counteractive practice in place.

What a counteractive practice looks like

The alternative to schema-absorbing is schema-engaging: making your assumptions visible before the output arrives and treating them as part of what needs to be examined, not just the content the model produces.

Practically, this means asking what you're assuming before you ask the model anything. What do you expect the answer to be? What framing are you using? What would have to be true for your question to make sense? Surface those first. Then evaluate the response against them, not just against itself.

This shifts the work. You're no longer just assessing the output. You're assessing the relationship between your framing and the output, which is where the actual epistemic risk lives.
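One way to make the practice concrete is to write the assumptions down in a fixed shape before prompting, then mark by hand which of them the response actually pushed back on. The sketch below is only an illustration under stated assumptions: `FramingRecord` and `unexamined_presuppositions` are hypothetical names invented here, not part of the PIL toolkit, and the judgement about what counts as "challenged" stays with the reader rather than the code.

```python
from dataclasses import dataclass, field


@dataclass
class FramingRecord:
    """Assumptions written down BEFORE the prompt is sent (hypothetical structure)."""
    question: str
    expected_answer: str
    framing: str
    presuppositions: list[str] = field(default_factory=list)


def unexamined_presuppositions(record: FramingRecord, challenged: set[str]) -> list[str]:
    """Return the presuppositions the reader did NOT mark as challenged by the response.

    Whatever is left is what the exchange took for granted rather than tested.
    """
    return [p for p in record.presuppositions if p not in challenged]


# Illustrative example: the question already assumes its own premise.
record = FramingRecord(
    question="Why is remote work less productive?",
    expected_answer="Communication overhead",
    framing="productivity as output per hour",
    presuppositions=[
        "remote work is less productive",
        "productivity is measurable per hour",
    ],
)

# After reading the response, the reader records what it actually questioned.
challenged = {"productivity is measurable per hour"}

leftover = unexamined_presuppositions(record, challenged)
print(leftover)
```

Anything left in `leftover` is a candidate for a second, differently framed prompt or for checking outside the model altogether.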

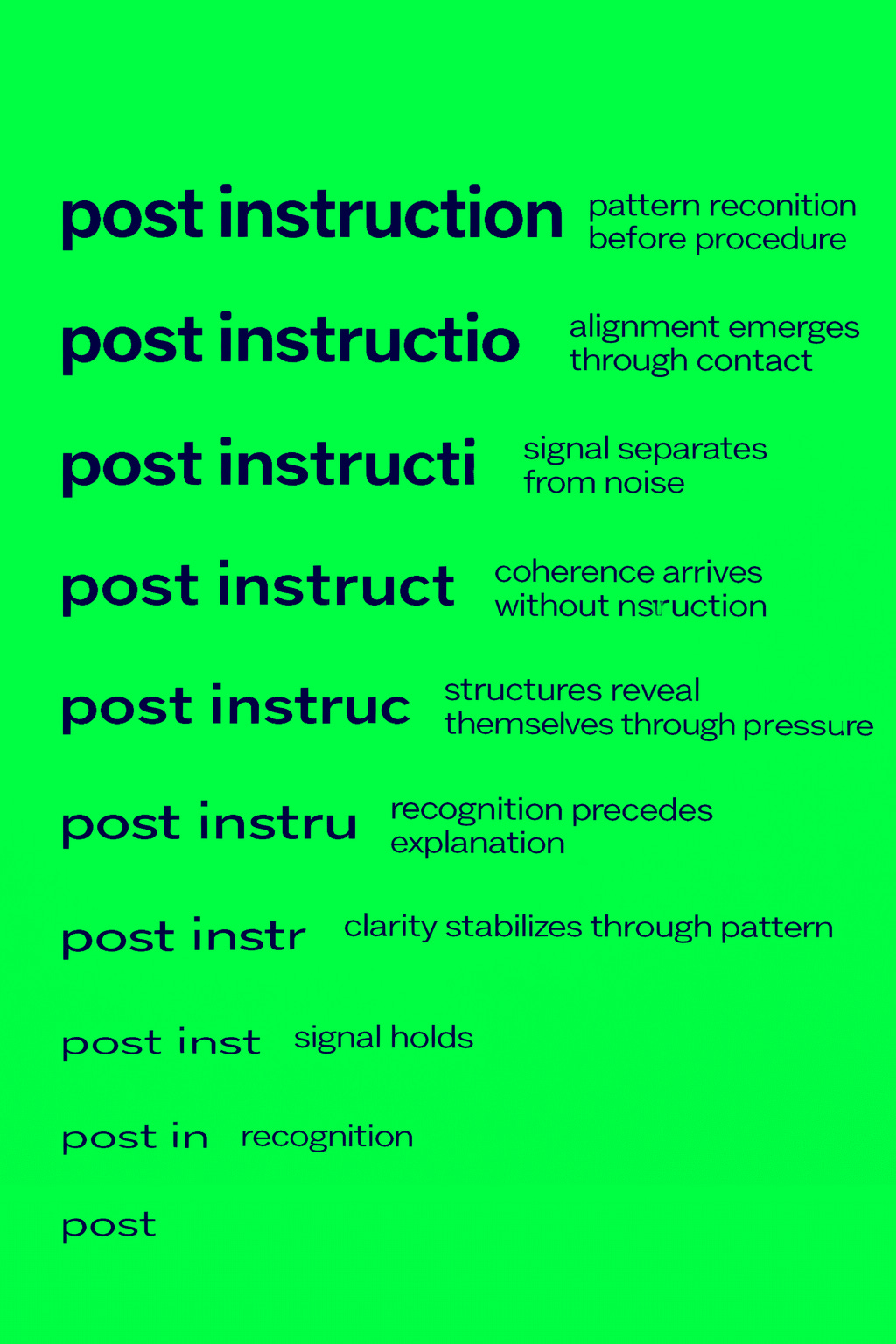

Post Instruction Literacy

Once the problem is visible, something else becomes visible with it: the ordinary assumption that instruction-following systems provide reliable orientation no longer holds. What remains when that assumption falls away is what Post Instruction Literacy (PIL) is built to address.

PIL starts from the position that operating inside instruction-following environments without a meta-level awareness of your own framing produces a predictable kind of error. Not hallucination, not misinformation in the conventional sense, but something subtler: coherent, well-structured responses that accurately reflect your assumptions back at you, with nothing in the process to flag that this has happened.

PIL provides instruments for detecting and interrupting this. Schema-engaging is the primary entry mechanism. The framework includes additional axes covering source friction, transmission conditions, and verification, each addressing a different point where the instruction-following environment distorts your relationship to the content you're working with.

Credibility and orientation are different problems

PIL is not a media literacy programme. Media literacy addresses credibility: is this source trustworthy, is this claim accurate. PIL addresses orientation: do you know where you are in relation to what you're reading.

The distinction matters because credibility checks don't solve the schema problem. A credible source can still disorient if it locks you inside an unexamined frame. You can verify that a source is reliable and still absorb its framing uncritically. Credibility operates on the object. Orientation operates on the relationship between reader and material.

The question PIL is built to answer is not whether this is true. It's whether you've actually thought about this, or whether you've been processed by it.

Where to go from here

PIL is published at [Postinstruction on Substack](https://substack.com/@project5am).

The toolkit, including the operational manual and core instruments, is available at https://github.com/Project5am/pil-toolkit

The framework is designed to be applied. The toolkit is the right starting point.

Do you know where you are in relation to what you're reading?